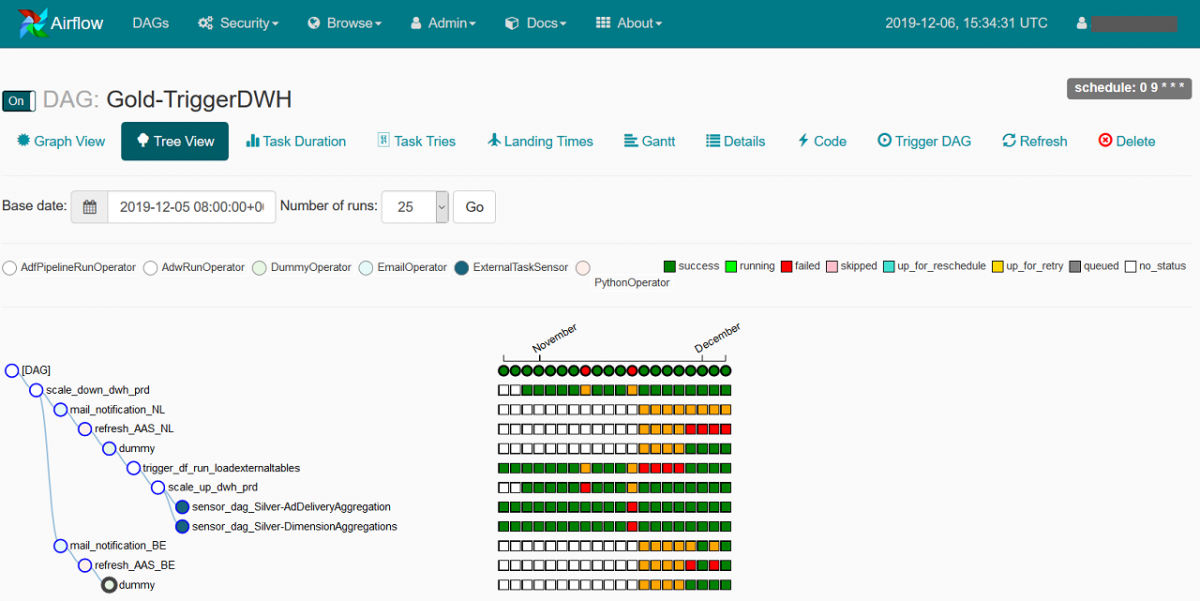

Ever since it was open-sourced by Airbnb back in 2015, Apache Airflow has established itself as the de facto standard for orchestration within the data space. Thanks to its feature-rich User Interface (UI), its ability to manage a wide range of operations, and particularly its no-nonsense and intuitive approach to organizing workflows via DAGs and tasks, it quickly eclipsed existing orchestrators like Spotify’s Luigi (which was open-sourced in 2012) and the Hadoop ecosystem’s Oozie.

Airflow is used today by data engineering teams around the world for an ever-expanding list of use cases, supported by custom operators, in-house abstractions, and a myriad of hacks to leverage some of its aging features.

On the other hand, the way we interact with data today is very different from how things were seven years ago:

- We no longer just want to run Spark jobs. Instead, we think about the quality, state, and lineage of our heterogeneous data assets.
- We can no longer tolerate weeks-long development cycles to generate new data assets. Instead, data pipelines should be written, tested, and deployed as efficiently and as fast as possible.
- We can no longer rely on a small centralized data engineering team that builds and maintains all the DAGs. Instead, we aim for self-service capabilities and automation that allow a larger set of contributors to build data assets and push them to production.
- Finally, the data stack we built for the above is moving fast and changing old patterns. And so we no longer think about tasks; we think about dbt models, Airbyte connectors, metrics, and a whole ecosystem of capabilities that are the modern incarnation of the Airflow operators we once had to write from scratch in 2015.

With the above in mind, it’s definitely time to ask the question: Is Airflow still the undisputed go-to orchestrator for data pipelines, or is Dagster, the new orchestrator that’s built with all the previous points in mind, the better option?

Setting the arena

The aim of this article is to deliver an exhaustive comparison of the two orchestration platforms in terms of concepts, capabilities, and adaptability to today’s modern data stack. To do so, we’ll compare the latest versions of both platforms at the time of writing:

- For Airflow, we’ll discuss the features of version 2.3.4 (and what has been announced regarding 2.4.0), so we won’t tackle the quirks and flaws of previous versions.
- For Dagster, we’ll discuss the capabilities of the newly released v1 (current version: 1.0.6), so we won’t mention concepts and traits of prior versions that are not part of the v1 release.

But before we actually compare the two tools, let’s briefly go through how each of them sees the world.

Airflow’s world: Everything is a task, and a task can be anything

Like most other tools that were built within major tech companies, Airflow was initially designed to help with a set of use cases and data processing workflows that Airbnb had at the time. This was clearly explained in the initial blog post that accompanied Airflow’s open-sourcing: it was built to automate and optimize data processes that are scheduled, mission-critical, evolving, and heterogeneous. Airflow never cared about which processes it was automating; for Airflow, they were all tasks. Seven years, 52 releases, 27k GitHub stars, and 11k forks later, Airflow still relies on the same core concepts outlined in the initial 2015 post.

A world still ruled by DAGs

Airflow’s undisputed cornerstone is the DAG (Directed Acyclic Graph).
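
To make the idea of organizing work as a DAG of tasks concrete, here is a minimal sketch in plain Python. It does not use Airflow's API: the task functions, the `dependencies` mapping, and the `run_dag` helper are all hypothetical names chosen for illustration, and the ordering relies only on the standard library's `graphlib` module (Python 3.9+).

```python
from graphlib import TopologicalSorter  # standard library, Python 3.9+

# Hypothetical tasks: any Python callable qualifies, which mirrors the
# "a task can be anything" idea. These names are illustrative only.
def extract():
    return "extracted"

def transform():
    return "transformed"

def load():
    return "loaded"

# Map each task name to the set of tasks it depends on (its upstream tasks).
dependencies = {
    "extract": set(),
    "transform": {"extract"},
    "load": {"transform"},
}
tasks = {"extract": extract, "transform": transform, "load": load}

def run_dag(dependencies, tasks):
    """Run every task once, in an order that respects the dependencies."""
    results = {}
    # static_order() raises graphlib.CycleError if the graph contains a
    # cycle, which is exactly what the "acyclic" in DAG rules out.
    for name in TopologicalSorter(dependencies).static_order():
        results[name] = tasks[name]()
    return results

results = run_dag(dependencies, tasks)
```

Note that the runner knows nothing about what each task does. It only knows the order in which tasks may run, which is the task-centric view of the world discussed in this article.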
